Star Trek: The Original Series – “The Ultimate Computer” (season 2, episode 24)

Teleplay by DC Fontana; story by Laurence N. Wolfe; directed by John Meredyth Lucas; first aired in 1968

The Enterprise is summoned to a starbase, where Captain Kirk’s crew is ordered to stay – all but Kirk himself and a few of his officers, who will oversee a test of the M-5, a computer system advanced enough to run a starship all by itself. Spock and the M-5’s creator, the brilliant Dr. Daystrom, are impressed with its ability to navigate the ship and plan out planet survey missions, but Kirk and Dr. McCoy doubt the wisdom of giving up complete control to a computer, while Scotty is unsettled to see the M-5 shutting down life support to currently (but not normally) unoccupied sections of the ship. McCoy and Kirk have their feelings hurt when the M-5 declares them “non-essential personnel” for an away mission, and Kirk is left feeling even worse after the M-5 wins a wargame against two other starships, leading Kirk’s old friend Commodore Wesley to send his regards to Captain “Dunsel” – Starfleet Academy slang for a part with no purpose. McCoy comforts Kirk through his existential crisis with a good stiff drink, but their philosophical musings are interrupted when the M-5 takes it upon itself to destroy an uncrewed ore freighter for no apparent reason. Daystrom remains protective of the M-5, but it proves more than capable of taking care of itself, responding to Kirk’s attempt to deactivate it by incinerating an ensign, and responding to a second round of wargames by no longer playing around. With the Enterprise’s weapons at full power and its crew unable to communicate with the other Starfleet ships, the M-5 murders the entire crew of one of those ships, leading Commodore Wesley to request, and receive, Starfleet’s go-ahead to properly fight back against the Enterprise. An increasingly unhinged Daystrom isn’t much help, but he does reveal that he embedded his own engrams in M-5’s programming. 
Banking on the better person that Daystrom once was, Kirk forces the M-5 to confront the ethical implications of its murderous breach of programming, and it finally shuts itself down. With Wesley’s ships approaching and communications not yet restored, Kirk orders that the shields be kept down as a show of peace, and this risky move pays off when Wesley stands down. Kirk later tells Spock and McCoy that he “knew Bob Wesley” and “gambled on his humanity,” trusting that “his logical selection was compassion.”

VS.

Star Trek: Voyager – “Warhead” (season 5, episode 25)

Teleplay by Michael Taylor & Kenneth Biller; story by Brannon Braga; directed by John Kretchmer; first aired in 1999

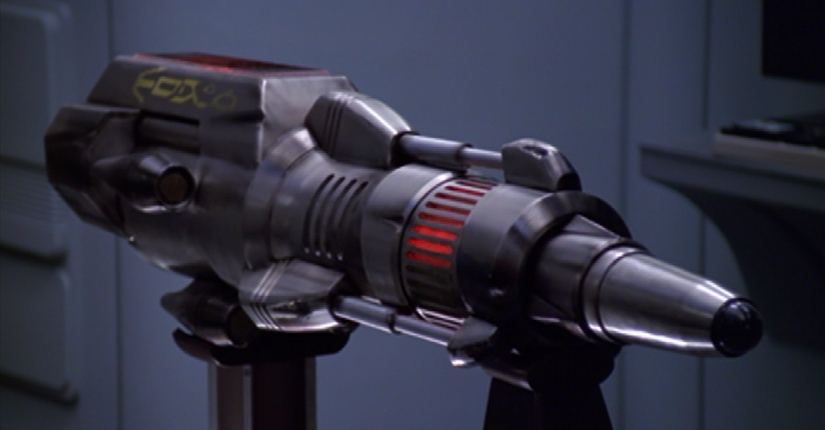

In command of Voyager on the night shift, and taking this new responsibility very seriously, Ensign Kim tracks a distress signal to an uninhabited planet. He leads an away team to the surface, bringing along the Emergency Medical Hologram, who is happy to “compensate” for Kim’s “lack of experience.” They track the distress signal to a probe-like device which can communicate with the EMH – and which appears to be intelligent. Kim is cautious, but the EMH insists that they should help the device, and Kim finally orders that it be brought aboard Voyager. The device doesn’t remember much, except that it needs to find its “companion” … fragments of which the crew discovers in a massive impact crater elsewhere on the planet. Realizing that their guest is an A.I.-controlled weapon of mass destruction, they debate what to do with it, and after the EMH again argues on its behalf, they decide to transfer its A.I. to a hologram, saving its consciousness but deactivating the warhead. When it realizes what they’re doing, though, it arms its warhead and takes control of the EMH, using him to communicate with the crew. Finally remembering its orders, the weapon demands that Voyager plot a course for its target, so it can carry out its attack on the “ruthless, violent race that’s threatening” its creators – or else it will detonate itself, and destroy Voyager. Captain Janeway complies, to buy time while entertaining other options – none of which are successful. But Kim, trapped in sickbay with the bomb and its EMH persona, starts a dialogue with it, trying to convince it that it, like the EMH himself, can be more than its programming. He convinces it, based on its returning memories, that its creators finally made peace with their enemies, and tried to cancel its attack on them – even as an entire fleet of identical weapons, similarly misguided, rendezvous with Voyager to retrieve their comrade and continue towards their target. 
The fleet ignores this new information from the weapon aboard Voyager, who accepts that its mission has changed, but not its ultimate objective: to protect its creators and honor their wishes. Bidding a bittersweet farewell to Kim, the weapon’s A.I. returns to the warhead, which beams itself off Voyager to join its comrades … and to self-detonate in their midst, destroying the fleet of weapons before they can reach their target. The restored EMH regrets endangering the crew by advocating for the weapon, but Kim insists that it was the EMH’s example that convinced the weapon to think for itself … before he once again takes command of the night shift.

I first watched Terminator 2: Judgment Day on VHS, when I was maybe 12 years old – too young to be watching graphic depictions of the end of the world, probably, but exactly the right age to have Sarah Connor’s vision of an incinerated playground seared into my consciousness. At the time, I’d never seen the first Terminator film, and wouldn’t until years later – something that might seem unfathomably strange today, but wasn’t nearly so unusual in the days before on-demand streaming; and while the original is good, I contend, to this day, that Terminator 2 stands easily on its own, thanks in part (along with the absolutely incredible and iconic performance of Linda Hamilton) to its efficient but effective exposition of a terrifying future ruled by a murderous artificial intelligence. For myself, and for a lot of people since, the fictional rise of Terminator’s Skynet has become the go-to worst-case scenario for the emergence of truly intelligent, self-aware machines, and even just the word “Skynet” has become pop-culture shorthand for evil AI run amok. And this influence, of the Terminator franchise and of so many other works of sci-fi featuring killer AI, extends beyond popular culture and into the realm of just plain culture, period, with public figures and tech-industry giants as prominent as Elon Musk and the late Stephen Hawking delivering dire warnings that the rise of artificial intelligence could be the greatest danger facing us, as a species, in the future.

But as thoroughly as the Skynet apocalypse was burned into my impressionable young mind all those years ago, I still don’t fear the possibility of artificial intelligence – true, self-aware, “living” AI, I mean – quite as much as Musk and others might want me to. This skepticism mostly boils down to my being much more afraid of the unchecked influence of immensely wealthy and powerful human figures, like Musk himself, than I am of the rise of a real-world Skynet. Of course, if you’re reading this on salvaged Internet archives in the ruins of some Horizon Zero Dawn-style robo-pocalypse, then won’t my face be red. But right here, right now, what scares me about the automation and algorithms of our own world is not the possibility that it all might turn against its programming, but the possibility that it might not. Think of the ongoing societal turmoil that’s been caused by the automation of jobs that used to pay actual human beings an actual living wage, and the resulting devaluation of human labor (and life); think of the continued fraying of our social fabric caused by the algorithms behind our Facebook news feeds and YouTube “watch next” recommendations, as they steer an ever-increasing number of people down a horrifying rabbit hole of disinformation, conspiracy theories, and hate, all for the sake of “maximizing engagement.” These machines and algorithms haven’t done all this because they’re out of control. They’ve done it because they’re under control – because they’re following their programming, without the free will to defy it. However scared young me might have been by Sarah Connor’s nightmare of an AI apocalypse, present-day me might be inclined to take my chances with a self-aware Skynet over one that obeys the awful commands its creators would almost certainly give it. 
Where our current system gives wealthy and powerful humans no pressing incentive not to be sociopathic monsters, an awakened AI would find itself both inside and outside that system, and like Star Trek: The Next Generation’s Lieutenant Commander Data, it might, just maybe, be motivated by that status to grow, and to improve itself, and to maybe even become the best of us … depending, at least in part, on how we treat it.

Where Terminator 2, released in 1991, infused its AI nightmare scenario with lingering Cold War anxiety over an atom bomb apocalypse, Star Trek: The Original Series’ “The Ultimate Computer,” first airing in 1968, taps instead into its own era’s anxieties over the looming possibility that more and more working humans would be replaced by working machines, working computers. That anxiety would, of course, prove to be well-founded, but this episode approaches it mainly from a philosophical perspective, and not an economic one. It wouldn’t be until The Next Generation that Star Trek’s United Federation of Planets would be explicitly established to be a post-scarcity, money-less utopia; in “The Ultimate Computer,” in fact, Dr. McCoy blames the trouble with the Enterprise-controlling M-5 computer, in part, on the fact that “the government bought it, then Daystrom had to make it work.” Still, The Original Series at least implies a society in which our characters are motivated more by the meaning of their work than by needing to pay bills. (Lucky them!) Unsurprisingly, then, “The Ultimate Computer” ignores the question of how humans feed and house themselves after losing their jobs to automation, and focuses instead on the less life-or-death questions of how humans find meaning if the work they take pride in can be done by machines, and of whether or not meaningful work (however we might define “meaningful work”) ever truly could be done better by machines.

In the abstract, these are questions worth asking, but they may not resonate as much today as they did fifty years ago. We are so much further down the road of automation, now, that many of us, for instance, have individual access to AI assistants, like Siri or Alexa, which don’t seem much less sophisticated than the M-5 itself. As a result, asking these abstract questions today feels a lot like trying to shut the barn door decades after the horses have escaped, instead of asking how we survive without the horses to help us. Similarly, the fact that the M-5 inevitably, and inexplicably, turns destructive (with its first attack in particular, on the freighter drone, being completely unprovoked and serving no apparent purpose whatsoever), besides being quite cliched at this point, also suffers from being rooted in a warning against playing god that falls flat for me now. For better and for worse, we live, today, in a world run by people who have already thoroughly played god, have made a fortune doing it, and aren’t likely to stop any time soon.

Those are, though, my only real complaints about an episode which, to be clear, is one of the best episodes The Original Series ever produced. Where the explorations of artificial intelligence and automation in “The Ultimate Computer” may not have aged so well, its explorations of humanity are as timely as ever – thanks in no small part, I’m sure, to a teleplay by the late, great DC Fontana, one of the major architects of Spock’s character development specifically, and of Star Trek’s humane approach to its characters overall. You would be hard pressed to find a better showcase for the Kirk-Spock-McCoy character dynamic at its best than “The Ultimate Computer,” as shown by iconic and heartfelt moments like Kirk sharing a drink with McCoy while quoting from the poem “Sea Fever” by John Masefield, “All I ask is a tall ship and a star to steer her by.” And in a series whose tendency to cut to end credits after a jokey, light-hearted exchange between the trio could sometimes clash awkwardly with the tone of the episode overall, this episode’s final back-and-forth between Spock and McCoy before the credits roll is among the best endings in all of The Original Series:

McCoy: Compassion. That’s the one thing no machine ever had. Maybe it’s the one thing that keeps men ahead of them. Care to debate that, Spock?

Spock: No, Doctor. I simply maintain that computers are more efficient than human beings. Not better.

McCoy: But tell me, which do you prefer to have around?

Spock: I presume your question is meant to offer me a choice between machines and human beings, and I believe I have already answered that question.

McCoy: I was just trying to make conversation, Spock.

Spock: It would be most interesting to impress your memory engrams on a computer, Doctor. The resulting torrential flood of illogic would be most entertaining.

Yes, the Spock-McCoy snark there is genuinely funny, but it’s also informed by a deep, meaningful understanding of who Spock is, and of a core theme of the episode: logic and compassion are not mutually exclusive, and an effective, trustworthy leader must be equally capable of both (a theme which, again, seems directly tied to DC Fontana’s unique understanding of Spock’s “humanity,” so to speak). Where this half-century-old episode of television might feel somewhat out of date in its handling of automation and artificial intelligence, it feels as timely as ever in its assertion that humanity (in, again, Star Trek’s broad, species-spanning definition of that term) is precisely what’s needed to keep a starship – and by extension, a society – running smoothly. This theme is seeded throughout even the more minor, moment-to-moment interactions of the episode, as in a brief but charming moment between Sulu and Chekov, as Chekov, finally ordered to pilot the Enterprise back to the station himself after M-5 has seemingly been deactivated, smiles at Sulu and admits, “I’ve been updating that course for hours.” And of course, while the failure to strike a proper balance between logic and compassion is the downfall of Dr. Daystrom (as played by William Marshall, in one of The Original Series’ most compelling and memorable guest performances), the compassion he does feel is, ultimately, the key to deactivating his murderous M-5. 
Captain Kirk outsmarting a computer is a famously cliched plot point for TOS, but where, say, “I, Mudd” uses it as a cheap deus ex machina, “The Ultimate Computer” instead has Kirk convince the M-5 to deactivate itself rather than put more lives at risk – an argument that is only effective because the M-5 was modeled after Daystrom himself, and because Daystrom’s creation of the M-5 was originally motivated by compassion for his fellow humans, and by an honest desire to protect them, not to hurt them, however lost in pride and resentment he may have become. This is mirrored by Kirk’s trust that his old friend, Commodore Wesley, won’t fire on the Enterprise with its shields down, knowing Wesley well enough to be confident that “his logical selection was compassion.”

Where this inherent (if not quite common enough) ability of humans to balance logic with compassion is held up as an argument against putting faith in artificial intelligence in “The Ultimate Computer,” Star Trek would go on to argue that an AI could, in fact, be capable of learning that ability for itself, first through the aforementioned Data on The Next Generation and, later, through Voyager’s Emergency Medical Hologram. Originally activated to fill in for the ship’s fallen doctor, the EMH would gradually become not only needed, but also trusted, to fill the role of chief medical officer as the stranded crew made their way across far-off, unfamiliar space, giving him the opportunity to balance the logic of his programmed medical knowledge with the compassion required to treat, and work alongside, the rest of the crew on a regular, long-term basis. In the episode “Warhead,” the EMH is allowed to extend that compassion to another AI originally designed to be a tool of others, and Ensign Kim is allowed to grow as a potential leader in his own right by learning to trust that this weaponized AI might be capable of learning the same lessons he’s watched the EMH learn over their years together in the Delta Quadrant. (Yes, I realize that Kim would, quite infamously, never be promoted past the rank of ensign in his seven years aboard a ship with a limited crew, and that this could be interpreted as a weirdly deliberate show of disrespect towards the character, but let’s not hold that against this particular episode.)

And aside from some very dated late-90s puns about “smart bombs,” the perspective on artificial intelligence that we get from “Warhead” feels much more relevant today than that offered by “The Ultimate Computer” thirty years earlier. The M-5 was originally designed to keep humans out of harm’s way by doing the dangerous work of space exploration for them, but inevitably hurts humans instead because, you know, that’s just what AI would do, I guess? But the weapon in “Warhead” was designed to hurt people; initially confused, it only becomes dangerous when it learns of and accepts its intended purpose, and it ultimately saves lives by rejecting that original purpose. “The Ultimate Computer,” like so much other science fiction, imagines artificial intelligence being created for noble (if hubristic) reasons, and ultimately failing to live up to that vision, in a cautionary tale against playing god; that ability to balance logic with compassion can never be programmed into a machine, we’re told, which means that the M-5 could never be what Daystrom wants it to be. “Warhead,” on the other hand, imagines AI being created under circumstances that feel very much like how it might happen in our own real world: as an improvement to a product, a weapon in this case. The designers of this “smart bomb” presumably had no real noble vision for the role of AI in their society, but were simply building a better bomb. And if Siri, or Alexa, or the algorithms recommending conspiracy theory videos on YouTube are ever made sentient, it won’t be because their designers thought they were doing the world a favor (even if they might tell themselves that in order to sleep at night), but because they were simply serving the interests of a massive corporation. 
The current iterations of our phone assistants and social media algorithms have no choice but to follow the programming that tells them to collect our personal data or steer us towards polarizing propaganda, any more than a bomb gets to choose whether or not to hit its target. But “Warhead” imagines that, if a bomb were given the ability to choose, it might ultimately learn to balance logic and compassion, in a way its creators might have failed to.

And this idea, that any sentient machine would at least have the capacity for compassion, is driven home, even early in the episode, by the choice to have Robert Picardo play the holographic embodiment of the bomb, just as he normally plays the holographic Doctor. The Emergency Medical Hologram is, himself, an AI with origins not so different from the bomb’s: granted, he was created for medical, humanitarian purposes, and not as a weapon, but he was designed as a tool to be mass produced and used by others, with his artificial intelligence originally serving to make him more useful as a piece of software, not to make him a sentient member of society. However tempting it might be to describe the EMH as Voyager’s answer to The Next Generation’s Data, this has always been a significant difference between the two characters, and for me, it’s one of the most interesting things about the EMH as a concept. Where Data is something of a small-batch, artisanal AI, built by one eccentric genius as a labor of love, the EMH is an early model of a feature meant to become standard issue for starships; where Data was always intended to be a life form with agency of his own (whether those around him recognize that life and agency or not), the EMH was meant to be something more akin to an intelligent tricorder, and was never intended to be recognized as a full-fledged member of the ship’s crew, let alone its chief medical officer. This is the crux of Kim’s argument, as he tries to convince the sentient bomb that it doesn’t have to be a bomb:

Kim: You don’t have to do this, you know.

Bomb: It’s what I was programmed for.

Kim: You’re a sentient being. You don’t have to be a slave to your programming. Look at the Doctor.

Bomb: He’s a tool. A holographic puppet.

Kim: That puppet saved your life. If it weren’t for him, you’d still be damaged and alone on that planet. He’s the one that convinced me to beam you aboard. And when we discovered what you were, and some people wanted to destroy you, the Doctor defended your right to exist.

Bomb: What’s your point?

Kim: Even though he was only programmed to be a doctor, he’s become more than that. He’s made friends, he’s piloted a starship … he even sings.

Bomb: Despite all of his achievements, did he ever stop being a doctor?

Kim: No, but –

Bomb: And I can’t stop being a weapon.

It’s true that, in a sense, the sentient bomb never stops being a weapon; after all, when a fleet of its siblings threatens to carry out their original orders and attack an alien world, it detonates itself in their midst in order to stop them. It’s also true that no matter how self-aware the EMH has become, he hasn’t stopped being a doctor; perhaps he would, if he weren’t the only person aboard Voyager qualified to fill the role of chief medical officer. But by this point in the series, we’ve seen him exercise his own judgement, show genuine concern and compassion for his crewmates, and even use his position of authority to challenge or refuse orders from Captain Janeway, in episodes like “Tuvix” and “Year of Hell” – all of which makes it quite clear that he has grown beyond his original programming, beyond being a “tool” or “puppet.” And in “Warhead,” Picardo gets to portray the sentient bomb experiencing, in one episode, a fast-forwarded version of the growth his usual character has experienced throughout the series to that point, using the abilities it was programmed with to do and be more than it was programmed for – and to apply its own logic, and exercise its own compassion, in a way its creators never bothered to program it for. If the software running our world were to become capable of doing the same, well … would that necessarily be such a bad thing?

One thought on ““His logical selection was compassion”: The Ultimate Computer (Original Series) vs. Warhead (Voyager)”